In my last blog post I mentioned that the next topic would be Azure Policy in combination with Azure Arc enabled Kubernetes.

Instead, I decided to write about Azure Policy for Kubernetes in general, covering both Azure Kubernetes Service and Azure Arc enabled Kubernetes.

As Azure Policy for Kubernetes is based on the Open Policy Agent Gatekeeper implementation, I will also highlight the differences between the native Gatekeeper and the Azure Policy for Kubernetes implementation.

-> https://docs.microsoft.com/en-us/azure/governance/policy/concepts/policy-for-kubernetes

-> https://github.com/open-policy-agent/gatekeeper

Azure Policy for Kubernetes vs. Gatekeeper

First, let us look at Gatekeeper itself. When you install Gatekeeper into your Kubernetes cluster, you end up with two pods and a validating admission controller.

The two pods are gatekeeper-audit and gatekeeper-controller-manager. gatekeeper-audit provides the audit functionality: it reports every pod or container that violates a policy defined via a constraint template and enabled by a constraint. It does not matter whether the constraint uses the enforcement action deny, which is the default, or dryrun. The gatekeeper-audit pod always checks for violations.

Only when the enforcement action is set to deny does the gatekeeper-controller-manager become active, and that is when you will notice it.

The validating admission controller forwards all requests to the gatekeeper-controller-manager for validation, except those for Kubernetes namespaces you exempt from Gatekeeper. When your deployment fulfills all policy conditions, the request is passed on for deployment. Otherwise, the gatekeeper-controller-manager denies it.
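To make the enforcement action more concrete, here is a minimal sketch of a constraint in dryrun mode. It assumes that the K8sRequiredLabels constraint template from the Gatekeeper demo is already installed in the cluster. The constraint name, the file name, and the label aks are illustrative choices and not taken from a real setup.

```shell
# Write a sample constraint manifest to a local file.
# Assumption: the K8sRequiredLabels constraint template from the
# Gatekeeper demo is already installed in the cluster.
cat > require-labels-dryrun.yaml <<'EOF'
apiVersion: constraints.gatekeeper.sh/v1beta1
kind: K8sRequiredLabels
metadata:
  name: pods-must-have-aks-label   # hypothetical name
spec:
  enforcementAction: dryrun        # report only; omit the field for the default deny
  match:
    kinds:
      - apiGroups: [""]
        kinds: ["Pod"]
  parameters:
    labels: ["aks"]
EOF

# Apply it against your cluster:
#   kubectl apply -f require-labels-dryrun.yaml
# Violations found by the audit show up in the status of the constraint:
#   kubectl get k8srequiredlabels pods-must-have-aks-label -o yaml
```

Setting enforcementAction to deny, or removing the field, should turn the same constraint into a blocking one.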

Azure Policy for Kubernetes has nearly the same architecture, as it uses Gatekeeper v3 under the hood. You get the gatekeeper-controller-manager and the validating admission controller, but you do not get the gatekeeper-audit pod. Instead, the audit functionality is part of the gatekeeper-controller-manager. Depending on the arguments you define in the Kubernetes template, you can also run the native Gatekeeper implementation with only one pod, as Azure Policy for Kubernetes does.

In addition, you have two azure-policy pods in the kube-system namespace. Those pods have several responsibilities.

First, they automatically deploy the defined constraint templates and constraints onto the Kubernetes cluster when the corresponding Azure Policy assignment targets the cluster. Second, they report detected violations back to Azure Policy, so you can check the compliance state of the Kubernetes cluster in the Azure portal.
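One way to see which mode a Gatekeeper installation runs in is to look at the container arguments of the deployment. The sketch below assumes the deployment name and namespace used in this post, and that the roles are selected with Gatekeeper's --operation flag (for example --operation=audit and --operation=webhook). Check the manifest of your Gatekeeper version before relying on it. The function name is made up for this example.

```shell
# Print the container arguments of the Gatekeeper controller manager.
# Assumption: deployment and namespace names match a default installation.
show_gatekeeper_args() {
  kubectl get deployment gatekeeper-controller-manager \
    --namespace gatekeeper-system \
    --output jsonpath='{.spec.template.spec.containers[0].args}'
}

# Usage (requires access to the cluster):
#   show_gatekeeper_args
```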

This kind of compliance check can also be implemented with Gatekeeper in combination with Azure Log Analytics and Azure Monitor Workbooks. I will cover this topic in a future blog post.

The current downside of Azure Policy for Kubernetes is the limitation to built-in policies. That said, you currently cannot use custom policies with custom constraint templates and constraints as you can with the native Gatekeeper implementation.

In the end, you get a set of basic pre-defined policies, which limits the use of Azure Policy for Kubernetes to proofs of concept right now.

I need to highlight here that Azure Policy for Kubernetes is in preview at the time of writing this blog post.

In summary, have a quick look at the following table, which compares the pros and cons of both solutions.

| Azure Policy for Kubernetes | Gatekeeper v3 |
| --- | --- |
| + Managed solution | + Full flexibility |
| + Uses Gatekeeper v3 | – Non-managed solution |
| + Automatic compliance reporting via Azure Policy | |
| + Automatic rollout of constraint templates and constraints via Azure Policy | |
| – Limited to built-in policies | |

Now let us get started on how to enable Azure Policy for Kubernetes on AKS and Azure Arc enabled Kubernetes.

Enabling Azure Policy for Kubernetes on Azure Kubernetes Service

The first thing you need to do is onboard your Azure subscription into the preview and install the AKS Azure CLI preview extension.

> az provider register --namespace Microsoft.ContainerService

> az provider register --namespace Microsoft.PolicyInsights

> az feature register --namespace Microsoft.ContainerService --name AKS-AzurePolicyAutoApprove

> az provider register -n Microsoft.ContainerService

> az extension add --name aks-preview
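Feature registration can take a few minutes, and as far as I can tell the provider registration only picks up the feature once its state is Registered. A small helper like the following can poll the state before you continue. The function names and the 30 second interval are my own choices for illustration.

```shell
# Return the registration state of the preview feature.
feature_state() {
  az feature show \
    --namespace Microsoft.ContainerService \
    --name AKS-AzurePolicyAutoApprove \
    --query properties.state \
    --output tsv
}

# Poll until the feature reports "Registered".
wait_for_feature() {
  until [ "$(feature_state)" = "Registered" ]; do
    echo "Feature not registered yet, checking again in 30 seconds..."
    sleep 30
  done
  echo "Feature is registered."
}

# Usage (requires a logged-in Azure CLI):
#   wait_for_feature
```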

Afterwards you enable Azure Policy for Kubernetes on an AKS cluster with just a click in the Azure portal.

Azure CLI, ARM templates and Terraform are also possible ways to do so.

> az aks enable-addons -g azst-aks2 -n azst-aks2 --addons azure-policy

You should now see the following pods in your AKS cluster indicating a successful installation.

> kubectl get pods -n gatekeeper-system

NAME                                             READY   STATUS    RESTARTS   AGE

gatekeeper-controller-manager-6f6bf8fdbb-5dn7f   1/1     Running   0          7h20m

> kubectl get pods -n kube-system -l=app=azure-policy

NAME                            READY   STATUS    RESTARTS   AGE

azure-policy-77fb8746cd-7ndfd   1/1     Running   0          7h22m

> kubectl get pods -n kube-system -l=app=azure-policy-webhook

NAME                                    READY   STATUS    RESTARTS   AGE

azure-policy-webhook-5c47b5985d-5pltd   1/1     Running   0          7h23m
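Instead of listing the pods by hand, you could let kubectl wait until they are ready. This is only a sketch that assumes the namespaces and labels from the output above. The function name and the timeout are arbitrary.

```shell
# Wait until the Gatekeeper and Azure Policy pods report Ready.
# Returns a non-zero exit code as soon as one of the checks fails.
check_policy_addon() {
  kubectl wait pod --all --namespace gatekeeper-system \
    --for=condition=Ready --timeout=120s &&
  kubectl wait pod --selector app=azure-policy --namespace kube-system \
    --for=condition=Ready --timeout=120s &&
  kubectl wait pod --selector app=azure-policy-webhook --namespace kube-system \
    --for=condition=Ready --timeout=120s
}

# Usage (requires access to the cluster):
#   check_policy_addon && echo "Azure Policy for Kubernetes is up"
```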

Enabling Azure Policy for Kubernetes on Azure Arc enabled Kubernetes

The first step is to onboard your Kubernetes cluster to Azure Arc.

-> https://www.danielstechblog.io/connect-kind-with-azure-arc-enabled-kubernetes/

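Afterwards, you run the following set of commands to enable Azure Policy for Kubernetes on an Azure Arc enabled Kubernetes cluster. The variables CLIENT_ID, CLIENT_SECRET, and TENANT_ID correspond to the appId, password, and tenant values returned by az ad sp create-for-rbac.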

> az ad sp create-for-rbac --name "KinD" --skip-assignment

> az role assignment create --role "Policy Insights Data Writer (Preview)" \
  --assignee "${CLIENT_ID}" \
  --scope "${RESOURCE_ID_AZURE_ARC_ENABLED_KUBERNETES_CLUSTER}" --verbose

> helm repo add azure-policy https://raw.githubusercontent.com/Azure/azure-policy/master/extensions/policy-addon-kubernetes/helm-charts

> helm upgrade azure-policy-addon azure-policy/azure-policy-addon-arc-clusters --install --namespace kube-system \
  --set azurepolicy.env.resourceid="${RESOURCE_ID_AZURE_ARC_ENABLED_KUBERNETES_CLUSTER}" \
  --set azurepolicy.env.clientid="${CLIENT_ID}" \
  --set azurepolicy.env.clientsecret="${CLIENT_SECRET}" \
  --set azurepolicy.env.tenantid="${TENANT_ID}"

You should see the following pods in your Azure Arc enabled Kubernetes cluster indicating a successful installation.

> kubectl get pods -n gatekeeper-system

NAME                                             READY   STATUS    RESTARTS   AGE

gatekeeper-controller-manager-7c95cb4875-x5sqx   1/1     Running   1          40m

> kubectl get pods -n kube-system -l=app=azure-policy

NAME                            READY   STATUS    RESTARTS   AGE

azure-policy-7b566d9574-4t8rd   1/1     Running   0          40m

> kubectl get pods -n kube-system -l=app=azure-policy-webhook

NAME                                    READY   STATUS    RESTARTS   AGE

azure-policy-webhook-795b8fbb7c-zrbrb   1/1     Running   0          40m

Now we are ready to roll out some policies to the Kubernetes clusters.

Policy rollout via Azure Policy

The built-in policies are all under the category Kubernetes in Azure Policy.

During the rollout you can enable or disable the enforcement of the policy assignment. This setting controls how Gatekeeper enforces the policy. Disabled means dryrun, so only violation reporting is active. Enabled means deny, the full enforcement action.

In my example I am rolling out the following three policies.

- Ensure only allowed container images in Kubernetes cluster

- Do not allow privileged containers in Kubernetes cluster

- Enforce labels on pods in Kubernetes cluster

The first policy is the disabled one, so we only get the compliance reporting for it. The other two fully enforce the policy definition.

On my AKS cluster I deployed a pod in a namespace before applying the policies mentioned above. I did the same on the Azure Arc enabled Kubernetes cluster, which is my KinD single node cluster.

The table shows whether the pods comply with the policies and which results we expect in the Azure Policy compliance report.

| Policy | Pod settings – AKS | Pod settings – Azure Arc | Expected Azure Policy compliance report |
| --- | --- | --- | --- |
| Ensure only allowed container images in Kubernetes cluster (ACR images are allowed) | Compliant. Uses a container image from ACR. | Non-compliant. Uses a container image from Docker Hub. | Compliant for AKS, non-compliant for Azure Arc |
| Do not allow privileged containers in Kubernetes cluster | Compliant | Non-compliant. Uses the setting privileged. | Compliant for AKS, non-compliant for Azure Arc |
| Enforce labels on pods in Kubernetes cluster (aks) | Non-compliant. aksengine: "true" | Compliant. azurearc: "true" | Non-compliant for AKS, compliant for Azure Arc |

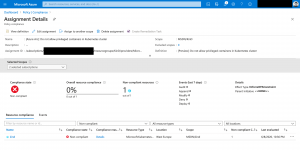

As the screenshot shows, we get the expected compliance reporting.

When we drill down, we also see which pod is violating the policy.

Trying to roll out a new deployment that violates a policy results in a deny message.

> kubectl describe replicasets go-webapp-5f588567dc

...

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedCreate 3s (x13 over 24s) replicaset-controller Error creating: admission webhook "validation.gatekeeper.sh" denied the request: [denied by azurepolicy-pod-enforce-labels-67a8cb9f9a53735e786920d6450a22690cab78316b26d5dab64044ceedcaed2e] you must provide labels: {"aks"}

Those deny messages are only visible on the replica set and not on the deployment itself, because the deployment creates the replica set, which in turn creates the pods.
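To find such denials without describing each replica set, you could filter the events of a namespace. The helper names below are made up for this sketch, and the sed expression assumes the message format shown in the event above.

```shell
# List FailedCreate events raised against replica sets in a namespace.
# The namespace defaults to "default" when no argument is given.
denied_creates() {
  kubectl get events --namespace "${1:-default}" \
    --field-selector reason=FailedCreate,involvedObject.kind=ReplicaSet
}

# Extract the constraint name from a Gatekeeper deny message,
# that is, the text between "[denied by " and the closing "]".
constraint_from_message() {
  printf '%s\n' "$1" | sed -n 's/.*\[denied by \([^]]*\)\].*/\1/p'
}

# Usage (requires access to the cluster):
#   denied_creates my-namespace
#   constraint_from_message "<message column of one event>"
```

The constraint name is handy because it tells you which policy assignment caused the denial.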

Summary

Azure Policy for Kubernetes is a solution you should try out and get familiar with. It is a managed solution that handles the deployment of constraint templates and constraints automatically, as well as the violation reporting. The current downside is the restriction to built-in policies.

For production use I recommend the native Gatekeeper implementation, as it is the most flexible solution. But it is not a one-way street: you can onboard your constraint templates and constraints to Azure Policy for Kubernetes with minimal effort once custom policies are, hopefully, allowed in a future iteration.