In my last blog post I showed you my local Kubernetes setup with KinD.

I also mentioned Istio, and today we walk through the configuration to get it running on Kubernetes in Docker.

As a prerequisite, I recommend reading my previous blog post before you continue with this one.

-> https://www.danielstechblog.io/local-kubernetes-setup-with-kind/

For KinD, I decided to use the extraPortMappings option to map the host ports 80, 443, and 15021 to fixed ports in the Kubernetes NodePort range (30000, 30001, and 30002).

    ...
    extraPortMappings:
    - containerPort: 30000
      hostPort: 80
      listenAddress: "127.0.0.1"
      protocol: TCP
    - containerPort: 30001
      hostPort: 443
      listenAddress: "127.0.0.1"
      protocol: TCP
    - containerPort: 30002
      hostPort: 15021
      listenAddress: "127.0.0.1"
      protocol: TCP
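For orientation, here is a minimal sketch of where such a mapping sits inside a complete KinD cluster configuration. It follows the pattern from the KinD ingress guide and is not necessarily identical to the file from the previous post. The node label is an assumption, added so that a nodeSelector on ingress-ready: "true" has a node to match.

```yaml
# Sketch only: a single-node KinD cluster config with one port mapping.
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
- role: control-plane
  # Assumption: label the node so a nodeSelector on ingress-ready matches it.
  kubeadmConfigPatches:
  - |
    kind: InitConfiguration
    nodeRegistration:
      kubeletExtraArgs:
        node-labels: "ingress-ready=true"
  extraPortMappings:
  - containerPort: 30000      # NodePort inside the KinD node container
    hostPort: 80              # port opened on the host
    listenAddress: "127.0.0.1"
    protocol: TCP
```

A file like this is passed to KinD with kind create cluster --config followed by the file name.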

Let us now start with the configuration settings I am using for Istio on a single-node KinD cluster.

    ...
    pilot:
      enabled: true
      k8s:
        hpaSpec:
          maxReplicas: 1
        overlays:
        - apiVersion: policy/v1beta1
          kind: PodDisruptionBudget
          name: istiod
          patches:
          - path: spec.minAvailable
            value: 0
    ...

For the istiod component, I set the HPA maxReplicas to 1 so that enough spare capacity remains for my workloads.

This in turn requires an overlay that overrides the PodDisruptionBudget and sets spec.minAvailable to 0. Otherwise, an Istio upgrade would not succeed: with only a single replica, the PodDisruptionBudget can never be fulfilled while the pod is replaced.
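To show where these settings live, the following sketch places the pilot section inside a complete IstioOperator resource. The wrapper (apiVersion, kind, spec.components) is the standard IstioOperator layout; anything the full template contains beyond the pilot settings is left out here.

```yaml
# Sketch only: the pilot settings in the context of an IstioOperator resource.
apiVersion: install.istio.io/v1alpha1
kind: IstioOperator
spec:
  components:
    pilot:
      enabled: true
      k8s:
        hpaSpec:
          maxReplicas: 1        # never scale istiod beyond one replica
        overlays:
        # Note: policy/v1beta1 was removed in Kubernetes 1.25. On newer
        # clusters the PodDisruptionBudget is policy/v1, so this apiVersion
        # may need to be adjusted to match what Istio renders.
        - apiVersion: policy/v1beta1
          kind: PodDisruptionBudget
          name: istiod
          patches:
          - path: spec.minAvailable
            value: 0            # allow the single istiod pod to be evicted
```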

    ...
    ingressGateways:
    - enabled: true
      k8s:
        hpaSpec:
          maxReplicas: 1
        nodeSelector:
          ingress-ready: "true"
        service:
          type: NodePort
        overlays:
        - apiVersion: v1
          kind: Service
          name: istio-ingressgateway
          patches:
          - path: spec.ports
            value:
            - name: status-port
              port: 15021
              targetPort: 15021
              nodePort: 30002
            - name: http2
              port: 80
              targetPort: 8080
              nodePort: 30000
            - name: https
              port: 443
              targetPort: 8443
              nodePort: 30001
        - apiVersion: policy/v1beta1
          kind: PodDisruptionBudget
          name: istio-ingressgateway
          patches:
          - path: spec.minAvailable
            value: 0
    ...

Next stop is the Istio ingress gateway configuration, as seen above. Same as for istiod, I set the HPA maxReplicas to 1 and adjust the PodDisruptionBudget.

Furthermore, I specify a nodeSelector to ensure that, on a multi-node KinD cluster, the Istio ingress gateway always runs on a particular node.

By default, the ingress gateway uses the service type LoadBalancer, which does not work on KinD because an SLB (Software Load Balancer) implementation is missing.

Therefore, we must switch to the type NodePort and pin the node ports to 30000, 30001, and 30002, the container ports from the KinD extraPortMappings, to expose the ingress gateway on the localhost / host interface.

That is all. Running the istioctl install -f install-istio.yaml command kicks off the Istio deployment.

After a couple of minutes, Istio is successfully installed.

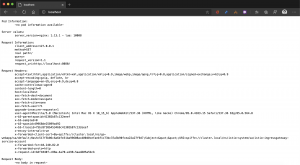

    > kubectl get pods
    NAME                                    READY   STATUS    RESTARTS   AGE
    grafana-b54bb57b9-n7f7h                 1/1     Running   0          57s
    istio-cni-node-ct2lj                    2/2     Running   0          57s
    istio-ingressgateway-6b74559cf9-7pw24   1/1     Running   0          57s
    istiod-59b9dd8b9c-gv6xc                 1/1     Running   0          76s
    kiali-d45468dc4-mbjv6                   1/1     Running   0          56s
    prometheus-6477cfb669-bsqx7             2/2     Running   0          56s

The KinD setup is now ready for your workloads. I already deployed an example application and can reach the app via http://localhost.
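The example application itself is not part of this post. As a rough sketch of how a workload could be exposed through the ingress gateway on port 80, a Gateway and VirtualService pair might look like the following. All names (example-gateway, example-app) are hypothetical, and the sketch assumes a Kubernetes Service called example-app listening on port 80 in the same namespace.

```yaml
# Sketch only: expose a hypothetical Service "example-app" via the ingress gateway.
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: example-gateway         # hypothetical name
spec:
  selector:
    istio: ingressgateway       # default label of the Istio ingress gateway pods
  servers:
  - port:
      number: 80
      name: http
      protocol: HTTP
    hosts:
    - "*"
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: example-app             # hypothetical name
spec:
  hosts:
  - "*"
  gateways:
  - example-gateway
  http:
  - route:
    - destination:
        host: example-app       # hypothetical Kubernetes Service
        port:
          number: 80
```

With the port mappings in place, a request to http://localhost reaches host port 80, is forwarded to node port 30000, and arrives at the ingress gateway's service port 80.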

I can also verify the health status of the Istio ingress gateway using the endpoint http://localhost:15021/healthz/ready.

    > curl -sI http://localhost:15021/healthz/ready
    HTTP/1.1 200 OK
    date: Fri, 17 Jul 2020 19:06:19 GMT
    x-envoy-upstream-service-time: 1
    server: envoy
    transfer-encoding: chunked

As usual, you can find the Istio template in my GitHub repository.

-> https://github.com/neumanndaniel/kubernetes/blob/master/kind/install-istio.yaml