In my last blog post, I walked you through the setup of the rate limiting reference implementation: the Envoy Proxy ratelimit service.

Today’s topic is connecting the Istio ingress gateway to the ratelimit service. Our first stop is the Istio documentation.

-> https://istio.io/latest/docs/tasks/policy-enforcement/rate-limit/

Connect Istio with the ratelimit service

Currently, the configuration of rate limiting in Istio is tied to the EnvoyFilter object. No higher-level resource abstracts it, which makes rate limiting quite difficult to implement. However, with the EnvoyFilter object we have access to all the goodness the Envoy API provides.

Let us start with the first Envoy filter, which connects the Istio ingress gateway to the ratelimit service. On its own, this filter does not yet apply rate limiting to inbound traffic.

    apiVersion: networking.istio.io/v1alpha3
    kind: EnvoyFilter
    metadata:
      name: filter-ratelimit
      namespace: istio-system
    spec:
      workloadSelector:
        labels:
          istio: ingressgateway
      configPatches:
        - applyTo: HTTP_FILTER
          match:
            context: GATEWAY
            listener:
              filterChain:
                filter:
                  name: "envoy.filters.network.http_connection_manager"
                  subFilter:
                    name: "envoy.filters.http.router"
          patch:
            operation: INSERT_BEFORE
            value:
              name: envoy.filters.http.ratelimit
              typed_config:
                "@type": type.googleapis.com/envoy.extensions.filters.http.ratelimit.v3.RateLimit
                domain: ratelimit
                failure_mode_deny: false
                timeout: 25ms
                rate_limit_service:
                  grpc_service:
                    envoy_grpc:
                      cluster_name: rate_limit_cluster
                  transport_api_version: V3
        - applyTo: CLUSTER
          match:
            cluster:
              service: ratelimit.ratelimit.svc.cluster.local
          patch:
            operation: ADD
            value:
              name: rate_limit_cluster
              type: STRICT_DNS
              connect_timeout: 25ms
              lb_policy: ROUND_ROBIN
              http2_protocol_options: {}
              load_assignment:
                cluster_name: rate_limit_cluster
                endpoints:
                  - lb_endpoints:
                      - endpoint:
                          address:
                            socket_address:
                              address: ratelimit.ratelimit.svc.cluster.local
                              port_value: 8081

I will not walk you through every line, only through the important ones.

    ...
    typed_config:
      "@type": type.googleapis.com/envoy.extensions.filters.http.ratelimit.v3.RateLimit
      domain: ratelimit
      failure_mode_deny: false
      timeout: 25ms
      rate_limit_service:
        grpc_service:
          envoy_grpc:
            cluster_name: rate_limit_cluster
        transport_api_version: V3
    ...

First, the value for domain must match what you defined in the config map of the ratelimit service.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: ratelimit-config
      namespace: ratelimit
    data:
      config.yaml: |-
        domain: ratelimit
        ...

The value for failure_mode_deny can be set to either false or true. If it is set to true, the Istio ingress gateway returns an HTTP 500 error whenever it cannot reach the ratelimit service, which makes your application unavailable. My recommendation: set the value to false to keep your application available.
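The effect of failure_mode_deny can be sketched in a few lines of Python. This is only an illustration of the decision described above, not Envoy code: the function name gateway_response and the outcome labels "ok", "over_limit", and "unreachable" are made up for this example, and the 200 stands in for whatever your application would return.

```python
def gateway_response(ratelimit_outcome: str, failure_mode_deny: bool) -> int:
    """Return the HTTP status the gateway would send for a single request.

    ratelimit_outcome is a hypothetical label used only in this sketch:
    "ok", "over_limit", or "unreachable" (error or timeout).
    """
    if ratelimit_outcome == "ok":
        return 200  # placeholder for the upstream application's response
    if ratelimit_outcome == "over_limit":
        return 429  # Too Many Requests
    if ratelimit_outcome == "unreachable":
        # Fail closed (500) when failure_mode_deny is true,
        # otherwise fail open and let the request pass through.
        return 500 if failure_mode_deny else 200
    raise ValueError(f"unknown outcome: {ratelimit_outcome}")
```

With failure_mode_deny set to false, an unreachable ratelimit service means requests are simply not rate limited for that period, which is the trade-off behind the recommendation.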

The timeout value defines how long the Istio ingress gateway waits for a response from the ratelimit service. It should not be set too high, as otherwise your users will experience increased latency on their requests, especially when the ratelimit service is temporarily unavailable. With Istio and the ratelimit service running on AKS, and the backing Azure Cache for Redis in the same Azure region as AKS, I found 25ms to be a reasonable timeout.

The last important value is cluster_name, which provides the name we reference in the second patch of the Envoy filter.

    ...
    - applyTo: CLUSTER
      match:
        cluster:
          service: ratelimit.ratelimit.svc.cluster.local
      patch:
        operation: ADD
        value:
          name: rate_limit_cluster
          type: STRICT_DNS
          connect_timeout: 25ms
          lb_policy: ROUND_ROBIN
          http2_protocol_options: {}
          load_assignment:
            cluster_name: rate_limit_cluster
            endpoints:
              - lb_endpoints:
                  - endpoint:
                      address:
                        socket_address:
                          address: ratelimit.ratelimit.svc.cluster.local
                          port_value: 8081

Basically, we define the FQDN and the port of the ratelimit Kubernetes service that the Istio ingress gateway connects to.

Rate limit actions

The Istio ingress gateway is now connected to the ratelimit service. However, we are still missing the rate limit actions that match our ratelimit service config map configuration.

    apiVersion: networking.istio.io/v1alpha3
    kind: EnvoyFilter
    metadata:
      name: filter-ratelimit-svc
      namespace: istio-system
    spec:
      workloadSelector:
        labels:
          istio: ingressgateway
      configPatches:
        - applyTo: VIRTUAL_HOST
          match:
            context: GATEWAY
            routeConfiguration:
              vhost:
                name: "*.danielstechblog.de:80"
                route:
                  action: ANY
          patch:
            operation: MERGE
            value:
              rate_limits:
                - actions:
                    - request_headers:
                        header_name: ":authority"
                        descriptor_key: "HOST"
                - actions:
                    - remote_address: {}
                - actions:
                    - request_headers:
                        header_name: ":path"
                        descriptor_key: "PATH"

Again, I walk you through the important parts.

    ...
    routeConfiguration:
      vhost:
        name: "*.danielstechblog.de:80"
        route:
          action: ANY
    ...

The routeConfiguration specifies the domain name and port the rate limit actions apply to.

    ...
    value:
      rate_limits:
        - actions:
            - request_headers:
                header_name: ":authority"
                descriptor_key: "HOST"
        - actions:
            - remote_address: {}
        - actions:
            - request_headers:
                header_name: ":path"
                descriptor_key: "PATH"

In this example configuration, the rate limit actions apply to the domain name, the client IP, and the request path. This exactly matches our ratelimit service config map configuration.

    ...
    descriptors:
      - key: PATH
        value: "/src-ip"
        rate_limit:
          unit: second
          requests_per_unit: 1
      - key: remote_address
        rate_limit:
          requests_per_unit: 10
          unit: second
      - key: HOST
        value: "aks.danielstechblog.de"
        rate_limit:
          unit: second
          requests_per_unit: 5
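To make the relationship between the actions and the descriptors more tangible, here is a rough Python sketch of the matching. It is a simplified model and not the ratelimit service's actual code: the names LIMITS, build_descriptors, and matching_limits are invented for this example, and it assumes that a descriptor without a value (remote_address) matches any value and is counted per distinct value.

```python
# Limits copied from the config map: (key, value or None) -> (requests, unit).
# None means "no value configured", which this sketch treats as "any value".
LIMITS = {
    ("PATH", "/src-ip"): (1, "second"),
    ("remote_address", None): (10, "second"),
    ("HOST", "aks.danielstechblog.de"): (5, "second"),
}


def build_descriptors(authority, client_ip, path):
    """Mimic the three rate_limits entries: one descriptor per entry."""
    return [
        ("HOST", authority),            # request_headers on :authority
        ("remote_address", client_ip),  # remote_address action
        ("PATH", path),                 # request_headers on :path
    ]


def matching_limits(descriptors):
    """Return the configured limit for every descriptor that matches."""
    matched = {}
    for key, value in descriptors:
        # Prefer an exact key/value match, then fall back to a key-only entry.
        limit = LIMITS.get((key, value)) or LIMITS.get((key, None))
        if limit is not None:
            matched[(key, value)] = limit
    return matched
```

In this model, a request to aks.danielstechblog.de/src-ip matches all three limits, while a request to another host and path under the same wildcard domain would only be limited per client IP.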

After applying the rate limit actions, we test the rate limiting.

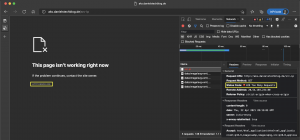

As seen in the screenshots, I am hitting the rate limit when calling the path /src-ip more than once per second.
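If you prefer a script over manual calls, a small Python helper along these lines can fire a few requests in quick succession and count the status codes. Treat it as a sketch: http_status and count_statuses are names chosen for this example, and the URL in the comment assumes the host and path from this post are reachable over plain HTTP on port 80.

```python
import time
import urllib.error
import urllib.request
from collections import Counter


def http_status(url: str) -> int:
    """Return the HTTP status code for a GET request to url."""
    try:
        with urllib.request.urlopen(url, timeout=5) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises for 4xx/5xx responses; a rate-limited call lands here.
        return error.code


def count_statuses(fetch, url, attempts, pause_seconds=0.0):
    """Call fetch(url) attempts times and count the returned status codes."""
    counts = Counter()
    for _ in range(attempts):
        counts[fetch(url)] += 1
        time.sleep(pause_seconds)
    return counts


# Example call (needs network access to the gateway; URL is an assumption):
# print(count_statuses(http_status, "http://aks.danielstechblog.de/src-ip", 5))
```

With the limit of one request per second on /src-ip, several calls within the same second should show up mostly as 429 responses in the counter.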

Summary

It is a bit tricky to get the configuration of the EnvoyFilter objects right. But once you get the hang of it, you can use all the goodness the Envoy API provides. That said, the Istio documentation is no longer your friend here. Instead, you should familiarize yourself with the Envoy documentation.

I added the Envoy filter YAML template to my GitHub repository and adjusted the setup script to include the template as well.

-> https://github.com/neumanndaniel/kubernetes/tree/master/envoy-ratelimit

So, what is next now that the Istio ingress gateway is connected to the ratelimit service? Observability! Remember that statsd runs as a sidecar container together with the ratelimit service?

In the last blog post of this series, I will show you how to collect the Prometheus metrics of the ratelimit service with Azure Monitor for containers.