The Istio sidecar proxy uses Envoy and therefore supports two different rate limiting modes: a local one targeting only a single service and a global one targeting the entire service mesh.

The local rate limit implementation only requires Envoy itself, without the need for a rate limit service. In contrast, the global rate limit implementation requires a rate limit service as its backend.

For Istio and Envoy, the Envoy Proxy community provides a reference implementation: the Envoy Proxy ratelimit service.

-> https://github.com/envoyproxy/ratelimit

So, in today’s post I walk you through the setup of the Envoy Proxy ratelimit service using an Azure Cache for Redis as its backend storage.

First, we deploy the Azure Cache for Redis in our Azure subscription in the same region we have the Azure Kubernetes Service cluster running.

> az redis create --name ratelimit --resource-group ratelimit \
    --location northeurope --sku Standard --vm-size c0

The choice here is the Standard SKU and size C0. It is the smallest Redis instance on Azure that offers an SLA of 99.9%. But you can also choose the Basic SKU.

The repo of the ratelimit service only offers a Docker Compose file and no Kubernetes template or Helm Chart. Therefore, we build the template ourselves.

So, what will our deployment look like?

Envoy Proxy ratelimit service deployment

The entire deployment consists of a namespace, a deployment, a service, a secret, a network policy, a peer authentication policy and two configuration maps.

-> https://github.com/neumanndaniel/kubernetes/tree/master/envoy-ratelimit

Let us focus on the deployment template. It will roll out the ratelimit service and a sidecar container exporting Prometheus metrics.

I have chosen the following configuration for the ratelimit service which is passed over as environment variables.

...
env:
- name: USE_STATSD
  value: "true"
- name: STATSD_HOST
  value: "localhost"
- name: STATSD_PORT
  value: "9125"
- name: LOG_FORMAT
  value: "json"
- name: LOG_LEVEL
  value: "debug"
- name: REDIS_SOCKET_TYPE
  value: "tcp"
- name: REDIS_URL
  valueFrom:
    secretKeyRef:
      name: redis
      key: url
- name: REDIS_AUTH
  valueFrom:
    secretKeyRef:
      name: redis
      key: password
- name: REDIS_TLS
  value: "true"
- name: REDIS_POOL_SIZE
  value: "5"
- name: LOCAL_CACHE_SIZE_IN_BYTES # 25 MB local cache
  value: "26214400"
- name: RUNTIME_ROOT
  value: "/data"
- name: RUNTIME_SUBDIRECTORY
  value: "runtime"
- name: RUNTIME_WATCH_ROOT
  value: "false"
- name: RUNTIME_IGNOREDOTFILES
  value: "true"
...

The first part is the configuration for exporting the rate limit metrics. We pass the statsd exporter configuration over as a configuration map object and use the default settings from the ratelimit service repo.

-> https://github.com/envoyproxy/ratelimit/blob/main/examples/prom-statsd-exporter/conf.yaml

- name: LOG_FORMAT
  value: "json"
- name: LOG_LEVEL
  value: "debug"

I recommend setting the log format to json and, during the introduction phase, the log level to debug.

Afterwards comes the Redis configuration, where we only change the default values to enable TLS and to reduce the pool size from 10 to 5. The latter is important so that we do not exhaust the Azure Cache for Redis connection limit for our chosen SKU and size.

- name: REDIS_TLS
  value: "true"
- name: REDIS_POOL_SIZE
  value: "5"
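To get a feeling for why the pool size matters, here is a rough back-of-the-envelope calculation in Python. Treat it as a sketch under assumptions: the limit of 256 client connections for a C0 instance is an assumption you should verify against the Azure documentation for your SKU, the replica count and the 20 percent headroom are made up for illustration, and the real number of connections per pod may differ from the configured pool size.

```python
# Rough connection budget for the Redis backend.
# Only REDIS_POOL_SIZE comes from the deployment; everything else is assumed.
REDIS_POOL_SIZE = 5            # value set in the deployment template
MAX_CLIENT_CONNECTIONS = 256   # assumed limit for a C0 instance, verify for your SKU


def connections_needed(replicas: int, pool_size: int = REDIS_POOL_SIZE) -> int:
    """Connections opened if every ratelimit pod fills its pool."""
    return replicas * pool_size


def max_replicas(limit: int = MAX_CLIENT_CONNECTIONS,
                 pool_size: int = REDIS_POOL_SIZE,
                 headroom_percent: int = 20) -> int:
    """Number of pods that fit while keeping some connections in reserve."""
    usable = limit * (100 - headroom_percent) // 100
    return usable // pool_size


print("3 replicas need", connections_needed(3), "connections")
print("replicas that fit with pool size 5:", max_replicas())
print("replicas that fit with pool size 10:", max_replicas(pool_size=10))
```

With the default pool size of 10, only half as many ratelimit pods fit into the same connection budget, which is the reason for lowering the value on a small instance.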

Another important setting is the local cache, which is turned off by default. The local cache only stores information about already exhausted rate limits and reduces calls to the Redis backend.

- name: LOCAL_CACHE_SIZE_IN_BYTES # 25 MB local cache
  value: "26214400"

As our Azure Cache for Redis instance has 250 MB of storage, I am using 25 MB for the local cache size, which is ten percent of the total Redis storage.

Looking at the runtime configuration, we specify a different root and subdirectory. But the important settings are RUNTIME_WATCH_ROOT and RUNTIME_IGNOREDOTFILES. The first one should be set to false and the second one to true. This guarantees the correct loading of our rate limit configuration, which we again pass in via a configuration map.
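The following Python sketch shows where the value 26214400 comes from and illustrates the idea behind the local cache. It is a conceptual illustration only and not the actual code of the ratelimit service: the class OverLimitCache and its method names are hypothetical, and the sketch ignores the size limit that the real cache enforces.

```python
import time

MIB = 1024 * 1024
REDIS_STORAGE_MB = 250  # storage of the C0 instance

# Ten percent of the Redis storage, expressed in bytes.
LOCAL_CACHE_SIZE_IN_BYTES = REDIS_STORAGE_MB * 10 // 100 * MIB
print(LOCAL_CACHE_SIZE_IN_BYTES)


class OverLimitCache:
    """Remembers keys that are already over their limit until the window ends."""

    def __init__(self, clock=time.monotonic):
        self._expires = {}   # cache key -> time at which the limit resets
        self._clock = clock

    def mark_over_limit(self, key: str, seconds_until_reset: float) -> None:
        self._expires[key] = self._clock() + seconds_until_reset

    def is_over_limit(self, key: str) -> bool:
        expiry = self._expires.get(key)
        if expiry is None:
            return False          # unknown key: the backend has to be asked
        if self._clock() >= expiry:
            del self._expires[key]
            return False          # window is over: ask the backend again
        return True               # still exhausted: no Redis call needed
```

As long as a key is known to be exhausted, the request can be rejected without a round trip to Redis. Only keys that are not in the cache, or whose window has ended, reach the backend.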

apiVersion: v1
kind: ConfigMap
metadata:
  name: ratelimit-config
  namespace: ratelimit
data:
  config.yaml: |-
    domain: ratelimit
    descriptors:
      - key: PATH
        value: "/src-ip"
        rate_limit:
          unit: second
          requests_per_unit: 1
      - key: remote_address
        rate_limit:
          requests_per_unit: 10
          unit: second
      - key: HOST
        value: "aks.danielstechblog.de"
        rate_limit:
          unit: second
          requests_per_unit: 5

In my rate limit configuration, I am using PATH, remote_address and HOST as rate limit descriptors. If you want, you can specify different config.yaml files in one configuration map to separate different rate limit configurations from each other.
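To make the configuration a bit more tangible, here is a small Python sketch of how a single descriptor entry could be resolved to a limit. It mirrors the configuration above as a Python dictionary. The function find_limit is hypothetical and only models the basic behavior as I understand it: an entry with a value matches exactly that value, and an entry without a value, like remote_address, matches any value.

```python
CONFIG = {
    "domain": "ratelimit",
    "descriptors": [
        {"key": "PATH", "value": "/src-ip",
         "rate_limit": {"unit": "second", "requests_per_unit": 1}},
        {"key": "remote_address",
         "rate_limit": {"unit": "second", "requests_per_unit": 10}},
        {"key": "HOST", "value": "aks.danielstechblog.de",
         "rate_limit": {"unit": "second", "requests_per_unit": 5}},
    ],
}


def find_limit(config, domain, key, value):
    """Return the rate_limit for a (key, value) entry or None if nothing matches."""
    if domain != config["domain"]:
        return None
    fallback = None
    for descriptor in config["descriptors"]:
        if descriptor["key"] != key:
            continue
        if descriptor.get("value") == value:
            return descriptor["rate_limit"]      # exact key and value match
        if "value" not in descriptor:
            fallback = descriptor["rate_limit"]  # key-only entry matches any value
    return fallback


print(find_limit(CONFIG, "ratelimit", "PATH", "/src-ip"))
print(find_limit(CONFIG, "ratelimit", "remote_address", "10.0.0.1"))
print(find_limit(CONFIG, "ratelimit", "PATH", "/other"))
```

A request that matches no descriptor, like the last call, is not rate limited by this configuration.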

In our Kubernetes service object definition, we expose all ports: the three different ports of the ratelimit service and the two ports of the statsd exporter.

| Container | Port | Description |
| --- | --- | --- |
| ratelimit | 8080 | healthcheck and json endpoint |
| ratelimit | 8081 | GRPC endpoint |
| ratelimit | 6070 | debug endpoint |
| statsd-exporter | 9102 | Prometheus metrics endpoint |
| statsd-exporter | 9125 | statsd endpoint |

Special configuration for Istio

Whether you want the ratelimit service to be part of the service mesh or not is a debatable point. I highly encourage you to include the ratelimit service in the service mesh. The Istio sidecar proxy provides insightful information when the Istio ingress gateway talks via GRPC with the ratelimit service, especially when you run into errors. But this is part of the next blog post about connecting the Istio ingress gateway to the ratelimit service.

So, why do we need a peer authentication and network policy for the ratelimit service?

The issue here is the GRPC protocol. When you use a STRICT mTLS configuration in your service mesh, you need an additional peer authentication policy. Otherwise, the ingress gateway cannot connect to the ratelimit service. This is a known issue in Istio, and it does not look like it will be fixed in the near future.

apiVersion: "security.istio.io/v1beta1"
kind: "PeerAuthentication"
metadata:
  name: "ratelimit"
  namespace: "ratelimit"
spec:
  selector:
    matchLabels:
      app: ratelimit
  portLevelMtls:
    8081:
      mode: PERMISSIVE

Therefore, we use a namespace-bound peer authentication policy that sets the mTLS mode on the GRPC port to PERMISSIVE, as seen above.

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: deny-all-inbound
  namespace: ratelimit
spec:
  podSelector: {}
  policyTypes:
  - Ingress
---
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-istio-ingressgateway
  namespace: ratelimit
spec:
  podSelector:
    matchLabels:
      app: ratelimit
  policyTypes:
  - Ingress
  ingress:
  - from:
    - namespaceSelector: {}
      podSelector:
        matchLabels:
          istio: ingressgateway
    ports:
    - port: 8081

Using the above network policy ensures that only the Istio ingress gateway can talk to our ratelimit service. I highly recommend making use of a network policy in that case.

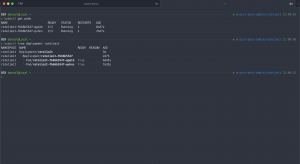

Envoy Proxy ratelimit service rollout

The rollout is done easily by running the setup.sh script.

-> https://github.com/neumanndaniel/kubernetes/blob/master/envoy-ratelimit/setup.sh

Before you run the script, adjust the configuration map to match your rate limit configuration. Afterwards, just specify the Azure Cache for Redis resource group and name as parameters.

> ./setup.sh ratelimit-redis istio-ratelimit

The ratelimit service should be up and running as seen in the screenshot.

Testing the ratelimit service functionality

Luckily, the project offers a GRPC client as well as a REST API endpoint, both of which we use to test the functionality of our ratelimit service configuration.

-> https://github.com/envoyproxy/ratelimit#grpc-client

-> https://github.com/envoyproxy/ratelimit#http-port

Let us start with the REST API endpoint. For that we need a test payload in JSON format.

{
  "domain": "ratelimit",
  "descriptors": [
    {
      "entries": [
        {
          "key": "remote_address",
          "value": "127.0.0.1"
        }
      ]
    },
    {
      "entries": [
        {
          "key": "PATH",
          "value": "/src-ip"
        }
      ]
    },
    {
      "entries": [
        {
          "key": "HOST",
          "value": "aks.danielstechblog.de"
        }
      ]
    }
  ]
}
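If you do not want to write the payload by hand, a few lines of Python can generate it. This is just a convenience sketch. The helper build_payload is a hypothetical name, and it only covers the simple case used here, where every descriptor consists of exactly one key and value pair.

```python
import json


def build_payload(domain, descriptors):
    """Build a request body from (key, value) pairs, one descriptor per pair."""
    return {
        "domain": domain,
        "descriptors": [
            {"entries": [{"key": key, "value": value}]}
            for key, value in descriptors
        ],
    }


payload = build_payload("ratelimit", [
    ("remote_address", "127.0.0.1"),
    ("PATH", "/src-ip"),
    ("HOST", "aks.danielstechblog.de"),
])
payload_json = json.dumps(payload, indent=2)
print(payload_json)
```

Redirecting the output of the script into payload.json gives you the same test payload as shown above.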

We then use kubectl port-forward to connect to one of the pods.

> kubectl port-forward ratelimit-fb66b5547-qpqtk 8080:8080

Calling the endpoint /healthcheck in our browser returns an OK.

Our test payload is sent via curl to the json endpoint.

> DATA=$(cat payload.json)

> curl --request POST --data-raw "$DATA" http://localhost:8080/json | jq .

---

{
  "overallCode": "OK",
  "statuses": [
    {
      "code": "OK",
      "currentLimit": {
        "requestsPerUnit": 10,
        "unit": "SECOND"
      },
      "limitRemaining": 9,
      "durationUntilReset": "1s"
    },
    {
      "code": "OK",
      "currentLimit": {
        "requestsPerUnit": 1,
        "unit": "SECOND"
      },
      "durationUntilReset": "1s"
    },
    {
      "code": "OK",
      "currentLimit": {
        "requestsPerUnit": 5,
        "unit": "SECOND"
      },
      "limitRemaining": 4,
      "durationUntilReset": "1s"
    }
  ]
}
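The response contains one status per descriptor, in the same order as in the request. The following Python sketch condenses the response from above into one line per descriptor. Two things are assumptions on my side: that OVER_LIMIT is the status code for an exhausted limit, and that a missing limitRemaining field means zero remaining requests, presumably because zero values are omitted from the JSON output. The function name summarize is hypothetical.

```python
import json

# The response shown above, reduced to one line per status.
RESPONSE = """
{"overallCode": "OK", "statuses": [
  {"code": "OK", "currentLimit": {"requestsPerUnit": 10, "unit": "SECOND"},
   "limitRemaining": 9, "durationUntilReset": "1s"},
  {"code": "OK", "currentLimit": {"requestsPerUnit": 1, "unit": "SECOND"},
   "durationUntilReset": "1s"},
  {"code": "OK", "currentLimit": {"requestsPerUnit": 5, "unit": "SECOND"},
   "limitRemaining": 4, "durationUntilReset": "1s"}
]}
"""


def summarize(response_text):
    """Return the overall code and one summary row per status."""
    response = json.loads(response_text)
    rows = []
    for status in response.get("statuses", []):
        limit = status["currentLimit"]
        rows.append({
            "limit": f'{limit["requestsPerUnit"]}/{limit["unit"].lower()}',
            # Assumption: an absent field means no requests are left.
            "remaining": status.get("limitRemaining", 0),
            "over_limit": status["code"] == "OVER_LIMIT",
        })
    return response["overallCode"], rows


overall, rows = summarize(RESPONSE)
print("overall:", overall)
for row in rows:
    print(row)
```

For the second descriptor, the single allowed request per second has just been used up, so nothing remains although the status code is still OK.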

Now let us connect to the GRPC endpoint and talk to the ratelimit service.

> kubectl port-forward ratelimit-fb66b5547-qvhns 8081:8081
> ./client -dial_string localhost:8081 -domain ratelimit -descriptors PATH=/src-ip
---
domain: ratelimit
descriptors: [ <key=PATH, value=/src-ip> ]
response: overall_code:OK statuses:{code:OK current_limit:{requests_per_unit:1 unit:SECOND} duration_until_reset:{seconds:1}}

The GRPC endpoint looks good as well, and our ratelimit service is fully operational.

Summary

It takes a bit of effort to get the reference implementation of a rate limit service for Envoy up and running. But it is worth the effort, as you get a well-performing rate limit service for your Istio service mesh implementation.

In the next blog post I walk you through the setup connecting the Istio ingress gateway to the ratelimit service.