In the past, I have written two blog posts about how to run untrusted workloads on Azure Kubernetes Service.

-> https://www.danielstechblog.io/running-gvisor-on-azure-kubernetes-service-for-sandboxing-containers/

-> https://www.danielstechblog.io/using-kata-containers-on-azure-kubernetes-service-for-sandboxing-containers/

Today, I walk you through how to gather log data from an untrusted workload isolated by Kata Containers with Fluent Bit. When you hear "isolated", you might assume that only one pattern works for gathering log data: the sidecar pattern.

In that pattern, Fluent Bit would run as a sidecar in every isolated pod to provide the logging capability. Furthermore, your application must either write its stdout/stderr output to a place where the sidecar container can read it or send its logs to Fluent Bit’s HTTP ingestion endpoint. The latter option would avoid a sidecar container in every isolated pod.

Both solutions have a lot of overhead for gathering log data from untrusted workloads isolated by Kata Containers. Fortunately, Kata Containers integrates well into containerd with Kubernetes via the Shim V2 API.

Hence, we continue to use the existing Fluent Bit daemon set installation on our Azure Kubernetes Service cluster. From Fluent Bit’s point of view, starting an untrusted workload does not differ from starting a trusted one. Log files of the containers in a pod run by Kata Containers are stored under /var/log/containers on the Kubernetes node. The Fluent Bit installation that is already in place picks up the new log data and ingests it into the logging backend.

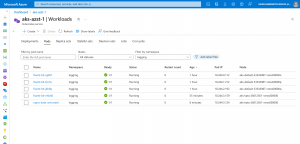
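To make the idea of "log files on the node" more concrete, here is a minimal sketch of how a single line in such a log file can be split into its fields. It assumes the CRI log format that containerd writes (timestamp, stream, partial/full tag, message). The function name parse_cri_line and the sample line are hypothetical and not taken from the cluster in this post. A real Fluent Bit installation does this parsing with its own parsers, not with a shell function.

```shell
# Split one CRI-formatted log line into its four fields.
# Assumed format: <timestamp> <stream> <P|F> <message>
parse_cri_line() {
  line=$1
  ts=${line%% *}      # everything before the first space
  rest=${line#* }     # everything after the first space
  stream=${rest%% *}  # stdout or stderr
  rest=${rest#* }
  tag=${rest%% *}     # P = partial line, F = full line
  msg=${rest#* }      # the application's own log message
  printf 'time=%s stream=%s tag=%s log=%s\n' "$ts" "$stream" "$tag" "$msg"
}

# Hypothetical sample line for illustration only.
parse_cri_line '2023-09-26T20:23:40.000000000Z stdout F GET / HTTP/1.1 200'
```

Because the line format depends only on containerd's CRI logging and not on the runtime handler, the same parsing applies to runc and Kata Containers pods alike.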

Let us look at one of the Kubernetes nodes that hosts the untrusted workload.

How does it work?

I ran the following command to add a Kata Containers node pool to the Azure Kubernetes Service cluster.

❯ AKS_CLUSTER_RG="rg-azst-1"
❯ AKS_CLUSTER_NAME="aks-azst-1"
❯ az aks nodepool add --cluster-name $AKS_CLUSTER_NAME --resource-group $AKS_CLUSTER_RG \
  --name kata --os-sku mariner --workload-runtime KataMshvVmIsolation --node-vm-size Standard_D4s_v3 \
  --node-taints kata=enabled:NoSchedule --labels kata=enabled --node-count 1 --zones 1 2 3

-> https://learn.microsoft.com/en-us/azure/aks/use-pod-sandboxing?WT.mc_id=AZ-MVP-5000119

After successfully deploying the Kata Containers node pool, we deploy an untrusted workload to this node pool.

apiVersion: v1
kind: Pod
metadata:
  name: nginx-kata-untrusted
spec:
  containers:
    - name: nginx-kata-untrusted
      image: nginx
  runtimeClassName: kata-mshv-vm-isolation
  tolerations:
    - key: kata
      operator: Equal
      value: "enabled"
      effect: NoSchedule
  nodeSelector:
    kata: enabled

We then use the kubectl debug command to examine the Kubernetes node’s container runtime configuration.

❯ kubectl debug node/aks-kata-26012561-vmss000001 -it --image=ubuntu

The Kubernetes node’s file system gets mounted under /host into the debug pod. Looking at the containerd configuration, we see the Kata Containers configuration.

❯ cat /host/etc/containerd/config.toml

version = 2
oom_score = 0
[plugins."io.containerd.grpc.v1.cri"]
  sandbox_image = "mcr.microsoft.com/oss/kubernetes/pause:3.6"
  [plugins."io.containerd.grpc.v1.cri".containerd]
    default_runtime_name = "runc"
    [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
      runtime_type = "io.containerd.runc.v2"
    [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
      BinaryName = "/usr/bin/runc"
    [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.untrusted]
      runtime_type = "io.containerd.runc.v2"
    [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.untrusted.options]
      BinaryName = "/usr/bin/runc"
    [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.kata]
      runtime_type = "io.containerd.kata.v2"
  [plugins."io.containerd.grpc.v1.cri".registry]
    config_path = "/etc/containerd/certs.d"
  [plugins."io.containerd.grpc.v1.cri".registry.headers]
    X-Meta-Source-Client = ["azure/aks"]
[metrics]
  address = "0.0.0.0:10257"

Checking the runtime class kata-mshv-vm-isolation shows that its handler is kata, which matches the kata runtime in the containerd configuration.

❯ kubectl get runtimeclasses.node.k8s.io kata-mshv-vm-isolation -o yaml

apiVersion: node.k8s.io/v1
handler: kata
kind: RuntimeClass
metadata:
  labels:
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/cluster-service: "true"
  name: kata-mshv-vm-isolation
scheduling:
  nodeSelector:
    kubernetes.azure.com/kata-mshv-vm-isolation: "true"
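The match between the runtime class handler and the containerd configuration can also be checked mechanically. The following is a minimal sketch, not an official tool: the helper names list_containerd_runtimes and has_runtime are hypothetical, and the sample input only repeats the runtime section headers from the configuration shown earlier.

```shell
# Print the runtime names defined in a containerd CRI config read from stdin.
# Only lines of the form [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.<name>]
# match; nested tables such as ...runtimes.runc.options are skipped.
list_containerd_runtimes() {
  sed -n 's/^[[:space:]]*\[plugins\."io\.containerd\.grpc\.v1\.cri"\.containerd\.runtimes\.\([A-Za-z0-9_-]*\)][[:space:]]*$/\1/p'
}

# Succeed if the handler name given as $1 is among the runtimes on stdin.
has_runtime() {
  list_containerd_runtimes | grep -qx -- "$1"
}

# Sample input: the runtime section headers from the config shown earlier.
sample_config='[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.untrusted]
[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.kata]'

printf '%s\n' "$sample_config" | list_containerd_runtimes
```

Inside the debug pod, the same check could be run against the real file, for instance with has_runtime kata < /host/etc/containerd/config.toml.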

For the following command, we need the container id of our untrusted workload.

❯ kubectl get pods nginx-kata-untrusted -o json | jq '.status.containerStatuses[].containerID' -r | cut -d '/' -f3
c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d
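The cut step works because the containerID field carries a runtime prefix in front of the actual ID. As a small sketch of the same idea without cut, the hypothetical helper below strips everything up to and including "://" with shell parameter expansion. The "containerd://" prefix in the example call is an assumption based on the containerd runtime used on this cluster.

```shell
# Strip the "<runtime>://" prefix from a Kubernetes containerID value.
container_id_from_status() {
  printf '%s\n' "${1##*://}"
}

# Example with the container ID from this post; the prefix is assumed.
container_id_from_status 'containerd://c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d'
```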

As we used the kubectl debug command to start our debug pod, the pod is not privileged, and we cannot use nsenter to run commands from the debug pod on the Kubernetes node itself. Instead, we use the run command functionality for Azure Virtual Machine Scale Sets to retrieve the information.

❯ AKS_CLUSTER_RG="rg-aks-azst-1-nodes"
❯ AKS_CLUSTER_VMSS="aks-kata-26012561-vmss"
❯ AKS_CLUSTER_VMSS_INSTANCE=0
❯ az vmss run-command invoke -g $AKS_CLUSTER_RG -n $AKS_CLUSTER_VMSS --command-id RunShellScript \
  --instance-id $AKS_CLUSTER_VMSS_INSTANCE --scripts "systemctl status containerd | grep c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d"
{
  "value": [
    {
      "code": "ProvisioningState/succeeded",
      "displayStatus": "Provisioning succeeded",
      "level": "Info",
      "message": "Enable succeeded:
[stdout]
Sep 26 20:23:38 aks-kata-26012561-vmss000000 containerd[1392]: time=\"2023-09-26T20:23:38.736319554Z\" level=info msg=\"CreateContainer within sandbox \\\"a49dceacf12b7786e9ff93eecf9c17a0c52f8d101975503acef7b4ecc8d9e06c\\\" for &ContainerMetadata{Name:nginx-kata-untrusted,Attempt:0,} returns container id \\\"c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d\\\"\"
Sep 26 20:23:38 aks-kata-26012561-vmss000000 containerd[1392]: time=\"2023-09-26T20:23:38.737003061Z\" level=info msg=\"StartContainer for \\\"c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d\\\"\"
Sep 26 20:23:38 aks-kata-26012561-vmss000000 containerd[1392]: time=\"2023-09-26T20:23:38.811806460Z\" level=info msg=\"StartContainer for \\\"c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d\\\" returns successfully\"
[stderr]
",
      "time": null
    }
  ]
}

The command above returns information from the containerd status showing that the untrusted workload has been scheduled and started correctly.

With the following command, we check if the untrusted workload runs in the Kata Containers sandbox.

❯ az vmss run-command invoke -g $AKS_CLUSTER_RG -n $AKS_CLUSTER_VMSS --command-id RunShellScript \
  --instance-id $AKS_CLUSTER_VMSS_INSTANCE --scripts "systemctl list-units | grep kata | grep c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d"
{
  "value": [
    {
      "code": "ProvisioningState/succeeded",
      "displayStatus": "Provisioning succeeded",
      "level": "Info",
      "message": "Enable succeeded:
[stdout]
15d42cb3b4c287-hostname
run-kata\\x2dcontainers-shared-sandboxes-a49dceacf12b7786e9ff93eecf9c17a0c52f8d101975503acef7b4ecc8d9e06c-mounts-c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d\\x2dae2fbc5dd0f923ed\\x2dtermination\\x2dlog.mount loaded active mounted /run/kata-containers/shared/sandboxes/a49dceacf12b7786e9ff93eecf9c17a0c52f8d101975503acef7b4ecc8d9e06c/mounts/c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d-ae2fbc5dd0f923ed-termination-log
...
run-kata\\x2dcontainers-shared-sandboxes-a49dceacf12b7786e9ff93eecf9c17a0c52f8d101975503acef7b4ecc8d9e06c-shared-c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d\\x2dc9c1c86332ee8d01\\x2dhosts.mount loaded active mounted /run/kata-containers/shared/sandboxes/a49dceacf12b7786e9ff93eecf9c17a0c52f8d101975503acef7b4ecc8d9e06c/shared/c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d-c9c1c86332ee8d01-hosts
[stderr]
",
      "time": null
    }
  ]
}

In the output, we see the key strings run-kata as well as /run/kata-containers, which confirms that the container runs inside a Kata Containers sandbox. As mentioned earlier, we find the logs of a Kata Containers sandboxed pod under the standard log path for container logs on the Kubernetes node, which is /var/log/containers.

Again, we use the debug pod to examine the log file path.

❯ ls -ahl /host/var/log/containers | grep c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d
lrwxrwxrwx 1 root root 106 Sep 26 20:23 nginx-kata-untrusted_logging_nginx-kata-untrusted-c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d.log -> /var/log/pods/logging_nginx-kata-untrusted_5d775411-fc5c-4a96-bfd6-f5fc3c01194d/nginx-kata-untrusted/0.log

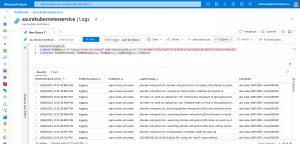
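The symlink name under /var/log/containers encodes the pod name, namespace, container name, and container ID, which is the kind of metadata a log collector can derive from the file path. The sketch below splits such a name into its parts. The helper name parse_container_log_name is hypothetical, and the sketch assumes the pattern pod_namespace_container-id.log seen in the listing above.

```shell
# Split a /var/log/containers file name into its components.
# Assumed pattern: <pod>_<namespace>_<container>-<container id>.log
parse_container_log_name() {
  name=${1##*/}           # drop any leading directory
  name=${name%.log}       # drop the .log suffix
  pod=${name%%_*}         # pod names contain no underscores
  rest=${name#*_}
  namespace=${rest%%_*}
  rest=${rest#*_}
  id=${rest##*-}          # the ID follows the last hyphen
  container=${rest%-*}    # container names may contain hyphens themselves
  printf 'pod=%s namespace=%s container=%s id=%s\n' "$pod" "$namespace" "$container" "$id"
}

# Example with the file name from the listing above.
parse_container_log_name 'nginx-kata-untrusted_logging_nginx-kata-untrusted-c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d.log'
```

Nothing in the name refers to the runtime handler, which fits the observation that the log path looks the same for trusted and untrusted workloads.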

Finally, we run the following KQL query on the Azure Log Analytics workspace, retrieving the logs of the untrusted workload.

ContainerLogV2_CL
| where PodName_s == "nginx-kata-untrusted" and ContainerId_s == "c5fddf80b5f758af8d267477a48397d11b1b98bbfd0f304512fa959f38b88e9d"
| project TimeGenerated, PodNamespace_s, PodName_s, LogMessage_s, Computer

Summary

Using Kata Containers on Azure Kubernetes Service lets you isolate untrusted workloads. Because Kata Containers integrates into containerd with Kubernetes via the Shim V2 API, the existing logging solution does not need any additional or specific configuration to pick up the logs from an untrusted workload.

You can find the example pod template in my GitHub repository.

-> https://github.com/neumanndaniel/kubernetes/tree/master/kata-containers