In Azure Kubernetes Service (AKS), Microsoft manages the control plane (Kubernetes API server, scheduler, etcd, etc.) for you. The control plane interacts with the AKS nodes in your subscription via a secure connection that is established through the tunnelfront / aks-link component.

The tunnel component differs depending on whether you run the AKS control plane in the free tier (SLO) or the paid tier (SLA). The free tier still uses the tunnelfront component, whereas the paid tier uses the aks-link component. This blog post covers the aks-link component, that is, the AKS control plane with the paid tier (SLA) option.

The tunnel component runs in the kube-system namespace on your nodes.

    > kubectl get pods -l app=aks-link -n kube-system
    NAME                        READY   STATUS    RESTARTS   AGE
    aks-link-7dd7c4b96f-986vs   2/2     Running   0          7m22s
    aks-link-7dd7c4b96f-f5zr5   2/2     Running   0          7m22s

The issue

Let me tell you what happened today on one of our AKS clusters during a release of one of our microservices.

We received an error stating that the call to the Istio webhook had timed out. Istio uses a mutating webhook for automatic sidecar injection.

[ReplicaSet/microservice]FailedCreate: Error creating: Internal error occurred: failed calling webhook 'sidecar-injector.istio.io': Post https://istiod.istio-system.svc:443/inject?timeout=30s: context deadline exceeded

Further investigation showed that commands like kubectl get or kubectl describe ran successfully, whereas kubectl logs ran into the typical timeout, which at first sight indicates an issue with the control plane.

Error from server: Get https://aks-nodepool-12345678-vmss000001:10250/containerLogs/microservice/microservice-1234567890-ab123/microservice-container: dial tcp x.x.x.x:10250: i/o timeout
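As a rough aid for spotting this symptom in scripts, the sketch below checks whether an error message looks like a timeout on the path from the API server to the kubelet (port 10250, as in the message above). The function name `is_kubelet_path_timeout` and the matching pattern are assumptions for illustration, derived only from the error text above.

```shell
#!/usr/bin/env bash

# Hypothetical helper: returns 0 when the given error text looks like a
# timeout while the API server tried to reach a kubelet on port 10250,
# and 1 otherwise.
is_kubelet_path_timeout() {
  local message="$1"
  case "$message" in
    *":10250"*"i/o timeout"*) return 0 ;;
    *) return 1 ;;
  esac
}
```

The webhook error shown earlier ("context deadline exceeded") does not match this pattern, so it has to be recognized separately.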

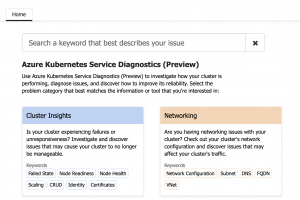

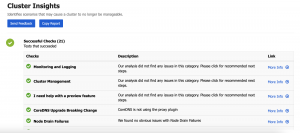

Resource health and AKS Diagnostics showed no issues, apart from a warning in the resource health blade that a planned control plane update had happened in the morning.

Degraded : Updating Control Plane (Planned)

At Tuesday, November 17, 2020, 6:22:41 AM GMT+1, the Azure monitoring system received the following information regarding your Azure Kubernetes Service (AKS): Your cluster was updating. You may see this message if you created your cluster for the first time or if there is a routine update on your cluster.

Recommended Steps

No action is required. You cluster was updating. The control plane is fully managed by AKS. To learn more about which features on AKS are fully managed, check the Support Policies documentation.

The AKS cluster was otherwise fully operational and there was no customer impact, but we could not deploy anything. We therefore opened a support ticket and started our own recovery procedures.

Once a support engineer was assigned, we quickly identified and mitigated the issue: we just needed to restart the aks-link component. We therefore stopped our own recovery procedures.

kubectl rollout restart deployment aks-link -n kube-system
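A minimal sketch of the restart followed by a verification step. `kubectl rollout status` waits until the restarted deployment is ready. The function name `restart_aks_link`, the 120-second timeout, and the optional pod argument used to re-test `kubectl logs` are assumptions for illustration, not part of the original procedure.

```shell
#!/usr/bin/env bash

# Hypothetical wrapper around the restart shown above.
# Usage: restart_aks_link [pod] [namespace]
# Returns 1 if the restart or rollout fails, 2 if the log re-test fails.
restart_aks_link() {
  local pod="${1:-}" namespace="${2:-default}"

  # Restart the tunnel component and wait until the new pods are ready.
  kubectl rollout restart deployment aks-link -n kube-system || return 1
  kubectl rollout status deployment aks-link -n kube-system --timeout=120s || return 1

  # Optional: re-run a call that needs the tunnel to confirm the fix.
  if [ -n "$pod" ]; then
    kubectl logs "$pod" -n "$namespace" --all-containers=true --tail=1 >/dev/null || return 2
  fi
}
```

Passing a pod that previously hit the i/o timeout gives a quick signal of whether the control plane can reach the kubelet again.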

Summary

The takeaway from this situation is to restart the aks-link component when the following conditions are met:

- Resource health blade shows a healthy state or a warning

- AKS Diagnostics shows a healthy state

- kubectl commands like get and describe succeed, as they only interact with the API server, that is, the control plane itself

- kubectl commands like logs fail as the control plane needs to interact with the kubelet component on the nodes

- Deployments fail as the control plane needs to call an admission webhook that runs on the nodes, such as the Istio sidecar injector

The difference here is important. Calls that only require the control plane succeed, whereas calls that require interaction between the control plane and the nodes fail. This pattern is a good indicator of an issue with the aks-link component.
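The distinction above can be sketched as a small probe: one call that only needs the API server and one that has to travel through the tunnel to the kubelet. The function name `check_tunnel`, its output strings, and the 30-second request timeout are assumptions for illustration. A failing `kubectl logs` can have other causes, so treat the result as a hint rather than a diagnosis.

```shell
#!/usr/bin/env bash

# Hypothetical probe. Usage: check_tunnel <pod> [namespace]
# Prints "ok", "tunnel-suspect", or "api-server-unreachable".
check_tunnel() {
  local pod="$1" namespace="${2:-default}"

  # API-server-only call: does not need the tunnel to the nodes.
  if ! kubectl get pod "$pod" -n "$namespace" --request-timeout=30s >/dev/null 2>&1; then
    echo "api-server-unreachable"
    return 2
  fi

  # Kubelet call: the API server must reach port 10250 on the node.
  if ! kubectl logs "$pod" -n "$namespace" --all-containers=true --tail=1 \
      --request-timeout=30s >/dev/null 2>&1; then
    echo "tunnel-suspect"
    return 1
  fi

  echo "ok"
}
```

In the situation described in this post, a call such as `check_tunnel microservice-1234567890-ab123 microservice` would be expected to report `tunnel-suspect`, since get succeeded while logs timed out.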

Hence, a restart of the aks-link component might solve this without you needing to reach out to Azure Support.