Running applications on an Azure Kubernetes Service cluster that make a lot of outbound calls might lead to SNAT port exhaustion.

In today’s blog article I walk you through how to detect and mitigate a SNAT port exhaustion on AKS.

What is a SNAT port exhaustion?

It is important to know what a SNAT port exhaustion is to apply the correct mitigation.

SNAT, Source Network Address Translation, is used in AKS whenever an outbound call to an external address is made. Assuming you use AKS in its standard configuration, it enables IP masquerading for the backend VMSS instances of the load balancer.

A SNAT port is consumed by every outbound connection to the same destination IP and destination port. The default configuration of an AKS cluster provides 64,000 SNAT ports with a 30-minute idle timeout before idle connections are released. Furthermore, AKS allocates the SNAT ports automatically based on the number of nodes in the cluster.

| Number of nodes | Pre-allocated SNAT ports per node |
| --- | --- |
| 1-50 | 1,024 |
| 51-100 | 512 |
| 101-200 | 256 |
| 201-400 | 128 |
| 401-800 | 64 |
| 801-1,000 | 32 |
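
As a minimal sketch, the table above can be expressed as a small lookup. The function name `default_ports_per_node` and the constant `DEFAULT_SNAT_TIERS` are hypothetical and only illustrate the tiers; they are not part of any Azure SDK.

```python
# Default per-node SNAT port allocation, copied from the table above.
# Each entry is (maximum node count for the tier, ports per node).
DEFAULT_SNAT_TIERS = [
    (50, 1024),
    (100, 512),
    (200, 256),
    (400, 128),
    (800, 64),
    (1000, 32),
]


def default_ports_per_node(node_count: int) -> int:
    """Return the pre-allocated SNAT ports per node for a given cluster size."""
    if node_count < 1:
        raise ValueError("node_count must be at least 1")
    for max_nodes, ports in DEFAULT_SNAT_TIERS:
        if node_count <= max_nodes:
            return ports
    raise ValueError("the table only covers clusters of up to 1,000 nodes")


for nodes in (20, 51, 400):
    print(nodes, "nodes ->", default_ports_per_node(nodes), "ports per node")
```

Note how scaling from 50 to 51 nodes halves the ports available to each node, which is why a growing cluster can suddenly start to exhaust its ports.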

When the SNAT ports are exhausted, new outbound connections fail. So, it is important to detect SNAT port exhaustion as early as possible.

How to detect a SNAT port exhaustion?

The guidance on Azure docs is well hidden.

-> https://docs.microsoft.com/en-us/azure/load-balancer/load-balancer-standard-diagnostics#how-do-i-check-my-snat-port-usage-and-allocation

-> https://docs.microsoft.com/en-us/azure/load-balancer/load-balancer-outbound-connections

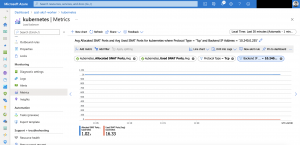

In the end, you check the metrics of your AKS cluster's load balancer. The metric SNAT Connection Count shows you when a SNAT port exhaustion happened. An important step here is to add the filter for the connection state and set it to failed.

You can filter even further on backend IP address level and apply splitting to it.

A value higher than 0 indicates a SNAT port exhaustion. As not all AKS nodes run into the port exhaustion at the same time, we use the metrics Allocated SNAT Ports and Used SNAT Ports to identify how bad the SNAT port exhaustion is on the affected node(s).

It is important to use two filters here, as otherwise we get an aggregated value which leads to false assumptions: one for the protocol type, set to TCP, and one for the backend IP address, set to the node that experiences the SNAT port exhaustion.

As seen in the screenshot above, the used ports are neither near nor equal to the allocated ports. So, all good in this case. But when the used ports value gets near or equals the allocated ports value and SNAT Connection Count is also above 0, it is time to mitigate the issue.
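
The decision rule described above can be sketched as a small check. This is only an illustration: the function name `needs_mitigation` is hypothetical, the 90% threshold for "near" is an assumption rather than guidance from Azure, and the sample numbers are made up.

```python
def needs_mitigation(used_ports: int, allocated_ports: int,
                     failed_connections: int,
                     near_ratio: float = 0.9) -> bool:
    """Return True when failed SNAT connections coincide with nearly full ports.

    near_ratio is an assumed cut-off for "near the allocated ports".
    """
    if allocated_ports <= 0:
        raise ValueError("allocated_ports must be positive")
    ports_nearly_exhausted = used_ports / allocated_ports >= near_ratio
    return failed_connections > 0 and ports_nearly_exhausted


# Hypothetical readings for a single node (backend IP), filtered to TCP.
print(needs_mitigation(used_ports=180, allocated_ports=1024, failed_connections=0))
print(needs_mitigation(used_ports=1010, allocated_ports=1024, failed_connections=37))
```

The inputs must be per-node values. Feeding in the aggregated values across all nodes would hide a single exhausted node behind the healthy ones.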

Mitigating a SNAT port exhaustion

For AKS, we have two different mitigation options that have a direct impact and solve the issue. The third option is more of a long-term strategy and an extension to the first one.

Our first option is the one which can be rolled out without architectural changes. We adjust the pre-allocated number of ports per node in the load balancer configuration. This disables the automatic allocation.

By default, in an AKS standard configuration the load balancer has one outbound public IP, which results in 64,000 available ports. Each node in the cluster automatically gets a predefined number of ports assigned. The assignment is based on the number of nodes in the cluster, as previously mentioned. Idle TCP connections get released after 30 minutes.

Assume our AKS cluster uses the cluster autoscaler and can scale up to a maximum of 20 nodes. We then adjust the load balancer configuration so that every node gets 3,000 ports pre-allocated, compared to the default 1,024, without requiring an additional public IP: 20 nodes with 3,000 ports each need 60,000 ports, which still fits into the 64,000 ports of a single outbound public IP. Larger values require additional outbound public IPs.

Furthermore, we set the TCP idle timeout to 4 minutes, which releases idle connections faster and frees used SNAT ports.

An example Terraform configuration is shown below.

    ...
    network_profile {
      load_balancer_sku = "standard"
      outbound_type     = "loadBalancer"
      load_balancer_profile {
        outbound_ports_allocated  = 3000
        idle_timeout_in_minutes   = 4
        managed_outbound_ip_count = 1
      }
      network_plugin     = "azure"
      network_policy     = "calico"
      dns_service_ip     = "10.0.0.10"
      docker_bridge_cidr = "172.17.0.1/16"
      service_cidr       = "10.0.0.0/16"
    }
    ...
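
To sanity-check values like these before rolling them out, the port budget can be calculated up front. The following is a rough sketch: the helper name `required_outbound_ips` is hypothetical, and it assumes the 64,000 ports per outbound public IP used in this article.

```python
import math

# Ports available per outbound public IP, as stated in this article.
PORTS_PER_PUBLIC_IP = 64_000


def required_outbound_ips(max_nodes: int, ports_per_node: int) -> int:
    """Return how many outbound public IPs the requested allocation needs."""
    if max_nodes < 1 or ports_per_node < 1:
        raise ValueError("max_nodes and ports_per_node must be positive")
    total_ports = max_nodes * ports_per_node
    return math.ceil(total_ports / PORTS_PER_PUBLIC_IP)


print(required_outbound_ips(20, 3000))  # 60,000 ports -> 1
print(required_outbound_ips(20, 4000))  # 80,000 ports -> 2
```

The calculation uses the autoscaler's maximum node count rather than the current one, as the ports are pre-allocated for every node the cluster can scale up to.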

The second option assigns a dedicated public IP to every node in the cluster. On the one hand, it increases the costs for large AKS clusters, but on the other hand, it fully mitigates the SNAT issue, as SNAT is not used anymore. You can find the guidance in the Azure docs.

At the beginning of this section, I mentioned a third option that complements the first one. When you use a lot of Azure PaaS services, for instance Azure Database for PostgreSQL, Azure Cache for Redis, or Azure Storage, you should use them with Azure Private Link. Using Azure PaaS services via their public endpoints consumes SNAT ports.

Making use of Azure Private Link reduces the SNAT port usage in your AKS cluster even further.

-> https://docs.microsoft.com/en-us/azure/private-link/private-link-overview

Summary

Long story short, keep an eye on the SNAT port usage of your AKS cluster, especially when a lot of outbound calls are made to external systems, whether these are Azure PaaS services or not.

One last remark: we have one more option for mitigating a SNAT port exhaustion, Azure Virtual Network NAT.

-> https://docs.microsoft.com/en-us/azure/virtual-network/nat-overview

I did not mention it earlier, as I could not find any information on whether it is supported by AKS. It should be, but I am not 100% sure. So, let us see.