In one of my recent blog posts I talked about running the Ambassador Kubernetes-native microservices API gateway on Azure Kubernetes Service, and specifically about setting the configuration option externalTrafficPolicy to Local on the Ambassador Kubernetes service object.

-> https://www.danielstechblog.io/running-ambassador-api-gateway-on-azure-kubernetes-service/
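As a quick reminder of what that setting looks like, here is a minimal sketch that builds such a service manifest as a Python dictionary and prints it as JSON, which kubectl accepts just like YAML. The name, label, selector, port, and DNS label annotation are taken from the kubectl describe output further down in this post. The overall shape of the manifest is an assumption and is not necessarily identical to the one used in the linked post.

```python
import json


def ambassador_service(external_traffic_policy: str = "Local") -> dict:
    """Build a Service manifest; the values mirror the describe output in this post."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": "ambassador",
            "labels": {"service": "ambassador"},
            "annotations": {
                "service.beta.kubernetes.io/azure-dns-label-name": "azst-aks1-ambassador"
            },
        },
        "spec": {
            "type": "LoadBalancer",
            # "Cluster" is the Kubernetes default; "Local" preserves the client IP.
            "externalTrafficPolicy": external_traffic_policy,
            "selector": {"service": "ambassador"},
            "ports": [{"port": 80, "targetPort": 80}],
        },
    }


if __name__ == "__main__":
    print(json.dumps(ambassador_service(), indent=2))
```

Saved under a hypothetical file name such as service.py, the output could be piped into kubectl with `python service.py | kubectl apply -f -`.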

But have you ever asked yourself how the Azure Load Balancer behaves in such a case? Whether you have or not, we will look at it now.

First, let us have a look at two different Kubernetes service objects: one without and one with the configuration option externalTrafficPolicy set to Local. For that, we run the kubectl describe command.

Ambassador service object – externalTrafficPolicy Cluster:

    > kubectl describe svc ambassador
    Name:                     ambassador
    Namespace:                default
    Labels:                   service=ambassador
    Annotations:              kubectl.kubernetes.io/last-applied-configuration:
                                {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{"service.beta.kubernetes.io/azure-dns-label-name":"azst-aks1-ambassador"},"...
                              service.beta.kubernetes.io/azure-dns-label-name: azst-aks1-ambassador
    Selector:                 service=ambassador
    Type:                     LoadBalancer
    IP:                       10.0.172.186
    LoadBalancer Ingress:     40.69.29.19
    Port:                     <unset>  80/TCP
    TargetPort:               80/TCP
    NodePort:                 <unset>  32474/TCP
    Endpoints:                10.240.0.254:80,10.240.0.89:80,10.240.1.114:80
    Session Affinity:         None
    External Traffic Policy:  Cluster

Ambassador service object – externalTrafficPolicy Local:

    > kubectl describe svc ambassador
    Name:                     ambassador
    Namespace:                default
    Labels:                   service=ambassador
    Annotations:              kubectl.kubernetes.io/last-applied-configuration:
                                {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{"service.beta.kubernetes.io/azure-dns-label-name":"azst-aks1-ambassador"},"...
                              service.beta.kubernetes.io/azure-dns-label-name: azst-aks1-ambassador
    Selector:                 service=ambassador
    Type:                     LoadBalancer
    IP:                       10.0.172.186
    LoadBalancer Ingress:     40.69.29.19
    Port:                     <unset>  80/TCP
    TargetPort:               80/TCP
    NodePort:                 <unset>  32474/TCP
    Endpoints:                10.240.0.254:80,10.240.0.89:80,10.240.1.114:80
    Session Affinity:         None
    External Traffic Policy:  Local
    HealthCheck NodePort:     31019

As you can see, the externalTrafficPolicy setting is not the only difference between the two service objects. When externalTrafficPolicy is set to Local, the service object also gets a HealthCheck NodePort assigned. The Azure Load Balancer uses the HealthCheck NodePort to determine whether an Ambassador pod is running on the node, and marks the node as healthy or unhealthy accordingly.
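To make the health check a bit more tangible, here is a small Python sketch. It rests on an assumption that is not shown in the output above: as far as I understand it, kube-proxy answers HTTP requests on the HealthCheck NodePort with status 200 when the node hosts at least one pod of the service and with 503 otherwise. The /healthz path and the function names are assumptions for illustration, and the code is a simplified model, not kube-proxy's or Azure's actual implementation.

```python
import urllib.error
import urllib.request


def health_check_status(local_endpoints: int) -> int:
    """Simplified model of the HTTP status a node returns on the HealthCheck NodePort."""
    # 200 when at least one pod of the service runs on this node, 503 otherwise.
    return 200 if local_endpoints > 0 else 503


def probe_node(node_ip: str, port: int = 31019, timeout: float = 2.0) -> bool:
    """Query the HealthCheck NodePort of one node, roughly like a load balancer probe."""
    url = f"http://{node_ip}:{port}/healthz"  # path is an assumption
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # HTTP 503, connection refused, and timeouts all count as unhealthy.
        return False
```

Calling `probe_node` only makes sense from a machine that can reach the node IPs, for example from inside the cluster's virtual network. The default port 31019 is the HealthCheck NodePort from the describe output above and will differ in other clusters.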

But let us step back for a moment and look at the default behavior, where the Kubernetes service object leaves externalTrafficPolicy at its default value of Cluster.

When you deploy a container application with a service object and externalTrafficPolicy set to Cluster, which you do not have to specify because it is the default, every node in the cluster can serve traffic targeting this container application. Each node is marked as healthy in the Azure Load Balancer backend pool. Even if a node does not have a running pod of this application on it, it simply forwards the traffic to a node that does, and that is the kind of magic Kubernetes provides.

As said earlier, with externalTrafficPolicy set to Local we get the HealthCheck NodePort, which ensures that the Azure Load Balancer only sends traffic to nodes that are running an Ambassador pod. Besides preserving the client IP address, the advantage is that we avoid the additional network hop that can occur with the default configuration. Nodes not running an Ambassador pod are marked as unhealthy in the Azure Load Balancer backend pool and do not serve any traffic for the Ambassador API gateway deployment.
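The difference between the two policies can be sketched as a small function that returns the nodes the load balancer would treat as healthy. This is a toy model of the behavior described above, not how Azure implements it, and the node names are hypothetical.

```python
from typing import Dict, List


def healthy_nodes(pods_per_node: Dict[str, int], policy: str) -> List[str]:
    """Return the nodes that stay in rotation for the given externalTrafficPolicy."""
    if policy == "Cluster":
        # Every node accepts traffic and forwards it to a pod if necessary.
        return sorted(pods_per_node)
    if policy == "Local":
        # Only nodes that run at least one pod of the service pass the health check.
        return sorted(node for node, pods in pods_per_node.items() if pods > 0)
    raise ValueError(f"unknown externalTrafficPolicy: {policy}")


# Hypothetical three-node cluster with two Ambassador replicas.
cluster = {"node-0": 1, "node-1": 1, "node-2": 0}
print(healthy_nodes(cluster, "Cluster"))
print(healthy_nodes(cluster, "Local"))
```

With two replicas on three nodes, the Cluster policy keeps all three nodes in rotation, while the Local policy drops the node without a pod.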

Here are some Azure diagnostic log entries showing the different states.

First, a scenario where three Ambassador pods are running across all three nodes in the Azure Kubernetes Service cluster. Because the Load Balancer backend pool is not degraded, there are no entries in the diagnostic log.

Now we scale the Ambassador deployment down to two replicas and get the following entry in the diagnostic log.

{ "time": "2019-04-04T12:33:47.0641700Z", "systemId": "REDACTED", "category": "LoadBalancerProbeHealthStatus", "resourceId": "REDACTED", "operationName": "LoadBalancerProbeHealthStatus", "properties": {"publicIpAddress":"40.69.29.19","port":80,"totalDipCount":3,"dipDownCount":1,"healthPercentage":66.666667}}

This entry shows that the Azure Load Balancer has set the status of the corresponding node in the backend pool to unhealthy: one of three backend instances is down. When we scale back to three replicas, the node is marked as healthy again and continues to serve traffic.

{ "time": "2019-04-04T13:07:34.3877875Z", "systemId": "REDACTED", "category": "LoadBalancerProbeHealthStatus", "resourceId": "REDACTED", "operationName": "LoadBalancerProbeHealthStatus", "properties": {"publicIpAddress":"40.69.29.19","port":80,"totalDipCount":3,"dipDownCount":0,"healthPercentage":100.000000}}
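If you want to evaluate such entries programmatically, a minimal Python sketch could look like the following. It only relies on the fields visible in the two entries above. Reading totalDipCount and dipDownCount as the total and the unhealthy number of backend instances is my interpretation of the field names, and the function name is hypothetical.

```python
import json


def summarize_probe_entry(line: str) -> dict:
    """Extract the backend health figures from one LoadBalancerProbeHealthStatus entry."""
    entry = json.loads(line)
    props = entry["properties"]
    total = props["totalDipCount"]
    down = props["dipDownCount"]
    healthy = total - down
    return {
        "time": entry["time"],
        "healthy": healthy,
        "total": total,
        # Recomputed from the counts; should match the logged healthPercentage.
        "percentage": 100.0 * healthy / total if total else 0.0,
    }


# The first log entry from this post, after scaling down to two replicas.
sample = (
    '{ "time": "2019-04-04T12:33:47.0641700Z", "systemId": "REDACTED", '
    '"category": "LoadBalancerProbeHealthStatus", "resourceId": "REDACTED", '
    '"operationName": "LoadBalancerProbeHealthStatus", "properties": '
    '{"publicIpAddress":"40.69.29.19","port":80,"totalDipCount":3,'
    '"dipDownCount":1,"healthPercentage":66.666667}}'
)
print(summarize_probe_entry(sample))
```

For the sample entry, two of three backend instances are healthy, which matches the logged healthPercentage of roughly 66.67.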

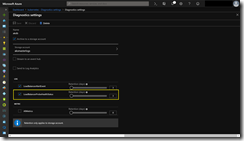

Azure diagnostic logs for the Azure Load Balancer are not enabled by default. If you want to turn them on, just follow this guide:

-> https://docs.microsoft.com/en-us/azure/load-balancer/load-balancer-monitor-log

Make sure you select LoadBalancerProbeHealthStatus as seen in the screenshot.