In today’s blog post we have a look at the Kubernetes credential plugin kubelogin for Azure Kubernetes Service and how to use it with Terraform.

-> https://github.com/Azure/kubelogin

-> https://kubernetes.io/docs/reference/access-authn-authz/authentication/#client-go-credential-plugins

The Azure Kubernetes Service cluster I am using for demonstration is an AKS-managed Azure Active Directory one with local accounts disabled. Disabling the local accounts turns off the admin credential endpoint and requires using an Azure Active Directory user or service principal for authentication and accessing the Kubernetes cluster. In our case, we use an Azure Active Directory service principal.

Set up the Terraform Kubernetes provider

Our first step when we want to use kubelogin with Terraform is the correct configuration of the Terraform Kubernetes Provider.

-> https://registry.terraform.io/providers/hashicorp/kubernetes/latest/docs#exec-plugins

Using the exec plugin for authentication requires the parameters host, cluster_ca_certificate, and the exec block.

    ...
    provider "kubernetes" {
      host = data.azurerm_kubernetes_cluster.aks.kube_config[0].host
      cluster_ca_certificate = base64decode(
        data.azurerm_kubernetes_cluster.aks.kube_config[0].cluster_ca_certificate,
      )
      exec {
        ...
      }
    }
    ...
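
The provider block reads host and cluster_ca_certificate from a data source addressed as data.azurerm_kubernetes_cluster.aks, whose definition is not shown in this post. A minimal sketch of it could look as follows. The cluster and resource group names are assumptions taken from the resource id in the terraform plan output further down, so adjust them to your environment.

```hcl
# Sketch only: looks up an existing AKS cluster so that its host, CA certificate,
# and Azure AD tenant id can be referenced in the provider configuration.
# Both names are assumptions derived from the plan output in this post.
data "azurerm_kubernetes_cluster" "aks" {
  name                = "azure-kubernetes-service"
  resource_group_name = "azure-kubernetes-service"
}
```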

The values for host and cluster_ca_certificate are both retrieved via an Azure Kubernetes Service data source. In this configuration, the exec block uses three parameters: api_version, command, and args.

    ...
    exec {
      api_version = "client.authentication.k8s.io/v1beta1"
      command     = "./modules/exec/kubelogin"
      args = [
        "get-token",
        "--login",
        "spn",
        "--environment",
        "AzurePublicCloud",
        "--tenant-id",
        data.azurerm_kubernetes_cluster.aks.azure_active_directory_role_based_access_control[0].tenant_id,
        "--server-id",
        data.azuread_service_principal.aks.application_id,
        "--client-id",
        data.azurerm_key_vault_secret.id.value,
        "--client-secret",
        data.azurerm_key_vault_secret.secret.value
      ]
    }
    ...

Currently, the API version that works with kubelogin and an Azure Kubernetes Service cluster is client.authentication.k8s.io/v1beta1. The API version client.authentication.k8s.io/v1 does not work; when you use it, you receive the following error message.

Error: Get "https://azure-kubernetes-service-2d7111d0.hcp.northeurope.azmk8s.io:443/api/v1/namespaces/kube-system": getting credentials: exec plugin is configured to use API version client.authentication.k8s.io/v1, plugin returned version client.authentication.k8s.io/v1beta1

The command parameter defines the kubelogin executable or the path to the executable. We cover that later in this post.

With the args parameter, you define all the options of the kubelogin executable you want to use.

As mentioned at the beginning of the blog post, we use an Azure Active Directory service principal to access the Azure Kubernetes Service cluster. With the exec plugin, the Terraform Kubernetes provider authenticates against the Kubernetes cluster with a token.

Therefore, we define the arguments get-token and --login spn. Furthermore, the arguments --tenant-id, --server-id, --client-id, and --client-secret are required when using an Azure Active Directory service principal.
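
Passing the client id and client secret as arguments puts both values on the command line of the kubelogin process. As a possible variation, the exec block of the Terraform Kubernetes provider also accepts an env map, and kubelogin documents the environment variables AAD_SERVICE_PRINCIPAL_CLIENT_ID and AAD_SERVICE_PRINCIPAL_CLIENT_SECRET for the spn login mode. The following is a sketch under those assumptions, not the configuration used in this post; verify the variable names against the kubelogin version you use.

```hcl
# Sketch only: same provider configuration as in this post, but the service
# principal credentials are handed to kubelogin via environment variables
# instead of command-line arguments. The env var names are an assumption
# based on the kubelogin documentation for the spn login mode.
provider "kubernetes" {
  host = data.azurerm_kubernetes_cluster.aks.kube_config[0].host
  cluster_ca_certificate = base64decode(
    data.azurerm_kubernetes_cluster.aks.kube_config[0].cluster_ca_certificate,
  )

  exec {
    api_version = "client.authentication.k8s.io/v1beta1"
    command     = "./modules/exec/kubelogin"
    args = [
      "get-token",
      "--login", "spn",
      "--environment", "AzurePublicCloud",
      "--tenant-id", data.azurerm_kubernetes_cluster.aks.azure_active_directory_role_based_access_control[0].tenant_id,
      "--server-id", data.azuread_service_principal.aks.application_id,
    ]
    # Environment variables set only for the kubelogin process.
    env = {
      AAD_SERVICE_PRINCIPAL_CLIENT_ID     = data.azurerm_key_vault_secret.id.value
      AAD_SERVICE_PRINCIPAL_CLIENT_SECRET = data.azurerm_key_vault_secret.secret.value
    }
  }
}
```

Either way, the secret values still end up in the Terraform state through the Key Vault data sources, so the state itself needs to be protected.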

The tenant id is again retrieved via the Azure Kubernetes Service data source. To keep the Azure Active Directory service principal credentials secure, they are stored in an Azure Key Vault and retrieved via the respective data sources.

    ...
    data "azurerm_key_vault" "kv" {
      name                = "azure-key-vault"
      resource_group_name = "azure-key-vault"
    }

    data "azurerm_key_vault_secret" "id" {
      key_vault_id = data.azurerm_key_vault.kv.id
      name         = "kubernetes-id"
    }

    data "azurerm_key_vault_secret" "secret" {
      key_vault_id = data.azurerm_key_vault.kv.id
      name         = "kubernetes-secret"
    }
    ...

When you use an Azure Kubernetes Service cluster with AKS-managed Azure Active Directory, the --server-id value is the application id of the Microsoft-managed enterprise application named Azure Kubernetes Service AAD Server.

Instead of copying and pasting the application id from the Azure portal into the provider configuration, we use an Azure Active Directory service principal data source for it.

    ...
    data "azuread_service_principal" "aks" {
      display_name = "Azure Kubernetes Service AAD Server"
    }
    ...
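
The data sources in this post come from three providers: azurerm, azuread, and kubernetes. The post does not show how they are declared, so the following is only a sketch of what the surrounding configuration might contain. Version constraints are omitted on purpose because the post does not state which versions were used, and credentials are assumed to come from the environment.

```hcl
# Sketch only: provider declarations assumed for the snippets in this post.
terraform {
  required_providers {
    azurerm = {
      source = "hashicorp/azurerm"
    }
    azuread = {
      source = "hashicorp/azuread"
    }
    kubernetes = {
      source = "hashicorp/kubernetes"
    }
  }
}

# The azurerm provider requires a features block, even when it is empty.
# Subscription and credentials are assumed to be supplied via the environment.
provider "azurerm" {
  features {}
}

# The azuread provider is assumed to pick up its credentials from the environment.
provider "azuread" {}
```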

Command parameter – exec block

Depending on how you provision your Terraform infrastructure as code definitions, it is enough to provide just the name of the executable for the command parameter.

    ...
    command = "kubelogin"
    ...

This configuration requires that the kubelogin executable is available in a folder listed in the PATH variable on your CI/CD system, which assumes that your CI/CD system allows the installation of additional software.

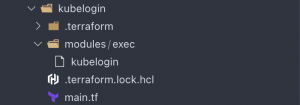

When the CI/CD system does not allow the installation of additional software, such as Terraform Cloud using the Terraform worker, you must include the kubelogin executable in the same folder as your infrastructure as code definition or in a subfolder of it.

In the screenshot, you see that the kubelogin executable is placed in a modules subfolder called exec. The command parameter then defines the path to the executable.

    ...
    command = "./modules/exec/kubelogin"
    ...

When you include the kubelogin executable in your infrastructure as code definition folder, it is sent as part of the build context to the Terraform worker. That is the only way to make the executable available in a CI/CD system where you cannot install additional software.
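
To support both variants with the same code, one option is to make the command configurable. The sketch below introduces a hypothetical input variable named kubelogin_command, which is not part of the original configuration. It defaults to the bare executable name and can be overridden with the bundled path.

```hcl
# Hypothetical variable, not part of the original configuration.
variable "kubelogin_command" {
  description = "Name of, or path to, the kubelogin executable."
  type        = string
  default     = "kubelogin" # resolved via PATH
}

provider "kubernetes" {
  host = data.azurerm_kubernetes_cluster.aks.kube_config[0].host
  cluster_ca_certificate = base64decode(
    data.azurerm_kubernetes_cluster.aks.kube_config[0].cluster_ca_certificate,
  )

  exec {
    api_version = "client.authentication.k8s.io/v1beta1"
    # Either "kubelogin" or a relative path such as "./modules/exec/kubelogin".
    command = var.kubelogin_command
    args = [
      "get-token",
      "--login", "spn",
      "--environment", "AzurePublicCloud",
      "--tenant-id", data.azurerm_kubernetes_cluster.aks.azure_active_directory_role_based_access_control[0].tenant_id,
      "--server-id", data.azuread_service_principal.aks.application_id,
      "--client-id", data.azurerm_key_vault_secret.id.value,
      "--client-secret", data.azurerm_key_vault_secret.secret.value,
    ]
  }
}
```

On a system where the executable is bundled with the code, the variable could then be set when running Terraform, for example with -var 'kubelogin_command=./modules/exec/kubelogin'.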

Deploy to Azure Kubernetes Service

With the Terraform Kubernetes provider configured correctly, we can deploy to an Azure Kubernetes Service cluster.

    ...
    data "kubernetes_namespace" "kube_system" {
      metadata {
        name = "kube-system"
      }
    }
    ...

Looking at the code sample above, we do not deploy anything to the Azure Kubernetes Service cluster in this example. Instead, we use the kubernetes_namespace data source and define outputs for the namespace uid and name. Running terraform plan after terraform init is enough to test the interaction between Terraform and the Azure Kubernetes Service cluster using the Kubernetes credential plugin kubelogin.

    > terraform plan
    data.azuread_service_principal.aks: Reading...
    data.azuread_service_principal.aks: Read complete after 0s [id=ca04bc23-9139-4c9f-b802-3441f5695d08]
    data.azurerm_key_vault.kv: Reading...
    data.azurerm_kubernetes_cluster.aks: Reading...
    data.azurerm_key_vault.kv: Read complete after 1s [id=/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/azure-key-vault/providers/Microsoft.KeyVault/vaults/azure-key-vault]
    data.azurerm_key_vault_secret.secret: Reading...
    data.azurerm_key_vault_secret.id: Reading...
    data.azurerm_key_vault_secret.secret: Read complete after 0s [id=https://azure-key-vault.vault.azure.net/secrets/kubernetes-secret/979932e22abd4311833ee0be8a3fa4c3]
    data.azurerm_key_vault_secret.id: Read complete after 0s [id=https://azure-key-vault.vault.azure.net/secrets/kubernetes-id/ef2329ebda2b4949934ab54c6218f1e5]
    data.azurerm_kubernetes_cluster.aks: Read complete after 1s [id=/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/azure-kubernetes-service/providers/Microsoft.ContainerService/managedClusters/azure-kubernetes-service]
    data.kubernetes_namespace.kube_system: Reading...
    data.kubernetes_namespace.kube_system: Read complete after 0s [id=kube-system]

    Changes to Outputs:
      + namespace_name = "kube-system"
      + namespace_uid = "dc4273ac-d382-4f36-a02e-7ee4231d874d"

    You can apply this plan to save these new output values to the Terraform state, without changing any real infrastructure.

Taking a look at the output we see that everything works as expected.
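
The two outputs that appear in the plan are not shown in the code samples of this post. A sketch of how they could be defined, assuming the output names from the plan and the kube_system data source from above, looks like this:

```hcl
# Sketch only: exposes name and uid of the namespace read by the data source.
# The metadata block is addressed as a list, hence the [0] index.
output "namespace_name" {
  value = data.kubernetes_namespace.kube_system.metadata[0].name
}

output "namespace_uid" {
  value = data.kubernetes_namespace.kube_system.metadata[0].uid
}
```

Because the uid is assigned by the cluster, a value showing up in the plan indicates that the provider authenticated successfully through kubelogin.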

Summary

It is a bit tricky to get the Terraform Kubernetes provider configuration working when using a Kubernetes credential plugin. The documentation covers the use case briefly and the only example is for Amazon Web Services (AWS). But it is worthwhile to invest the time to get it working.

You can find my Terraform configuration example in my GitHub repository.

-> https://github.com/neumanndaniel/terraform/tree/master/modules/kubelogin